Unleash the Crazy Ones

The internet enabled humans to share videos that TV would never air. AI will enable them to share tools that companies would never build.

Is it possible to stop writing about AI? Not anytime soon. I hope you're as excited and fascinated as I am by all the recent announcements. This week, Google announced a new partnership with Replit to help programmers write code faster, or stop writing it altogether. And while things are moving quickly, more than 1,000 tech leaders published an open letter calling for a 6-month pause on training advanced AI models. The signatories, including Elon Musk and Steve Wozniak, suggest using these months to determine how AI should be regulated.

In contrast, Tyler Cowen believes that a few months won't make a difference. Not because he thinks we'll be fine, but because he believes we have no way of knowing how things will unfold. For the first time, we are living through "moving history." And if history is any guide, people, both optimists and pessimists, cannot predict the medium- and long-term effects of transformative technology. And so, we might as well brace ourselves and dive headlong into the stream.

> The reality is that no one at the beginning of the printing press had any real idea of the changes it would bring. No one at the beginning of the fossil fuel era had much of an idea of the changes it would bring. No one is good at predicting the longer-term or even medium-term outcomes of these radical technological changes (we can do the short term, albeit imperfectly). No one...
>
> Given this radical uncertainty, you still might ask whether we should halt or slow down AI advances. “Would you step into a plane if you had radical uncertainty as to whether it could land safely?” I hear some of you saying...
>
> I would put it this way. Our previous stasis, as represented by my #1 and #2, is going to end anyway. We are going to face that radical uncertainty anyway. And probably pretty soon. So there is no “ongoing stasis” option on the table.

If I understand him correctly, Tyler's core argument is that unilaterally slowing down our AI research will come at a high cost. And we're very likely to get nothing in return. AI will continue to develop, and we'll have to contend with its effects whether we like it or not, so we might as well stay ahead of everyone else.

I reached the same conclusion, albeit via a different path. We should not stop. Not because stopping is a bad idea. But because China and other countries are racing ahead in developing their own systems. In addition, America's tech giants don't know how to stop, and many AI models are open source and beyond the control of any specific company. So, we can only pretend to stop, but we can't really stop.

Major craziness is coming. Most of it will not involve the end of the world. Most of it will be quite fun. Or so I think. I wrote about that in passing earlier this week, but I would like to elaborate and refine my point.

In "Is Your Job Safe?" I pointed out that we've reached a point where "a single person with an idea can now build software without knowing how to code."

Humanity has been granted a host of new superpowers. And everyone gets to play. For software, it's a new situation. But its implications are familiar. When Instagram enabled everyone to become a "pro photographer," the result was an explosion of new photographers and new businesses built on the back of popular Instagram feeds. Some photographers lost their jobs, but most of them and many others could suddenly make money from photography. And a tiny minority of Instagram "posters" became wealthier than any photographer ever was.

The same thing will happen with software. Millions of people will launch new apps and software-powered projects. Most of them will never make a buck. Some will suddenly make a living from "software" even though they can't code. And some will become wealthy beyond belief — as software eats even more of the world.

The "photographer" analogy was not accurate. Let me put it differently. Thirteen years ago, anyone who wanted to produce a nice photo or video had to procure the services of an expert (or become one). Likewise, anyone who wanted to distribute high-quality images and videos needed to rely on existing publishers and deal with high costs. High-speed internet, high-quality mobile cameras, and high-powered processors and software changed everything.

As a result, technical competence lost its power to constrain the flow of content. Anyone with a crazy idea could suddenly take a selfie or record a quick video. And that photo or video suddenly had the chance of "taking over the world." And some of them did. From Gangnam Style to MrBeast to Donald Trump, the economy was taken over by people who did not ask anyone's permission and did not meet the editorial standards of the previous era. It worked so well that these freaks managed to force traditional media institutions to lower their standards.

Showbiz is showbiz. But what will the world look like when the same dynamic plays out in other, more serious fields? It's starting to happen.

In this (paywalled) interview on the Stratechery podcast, Daniel Gross highlights the evolution of technology ecosystems:

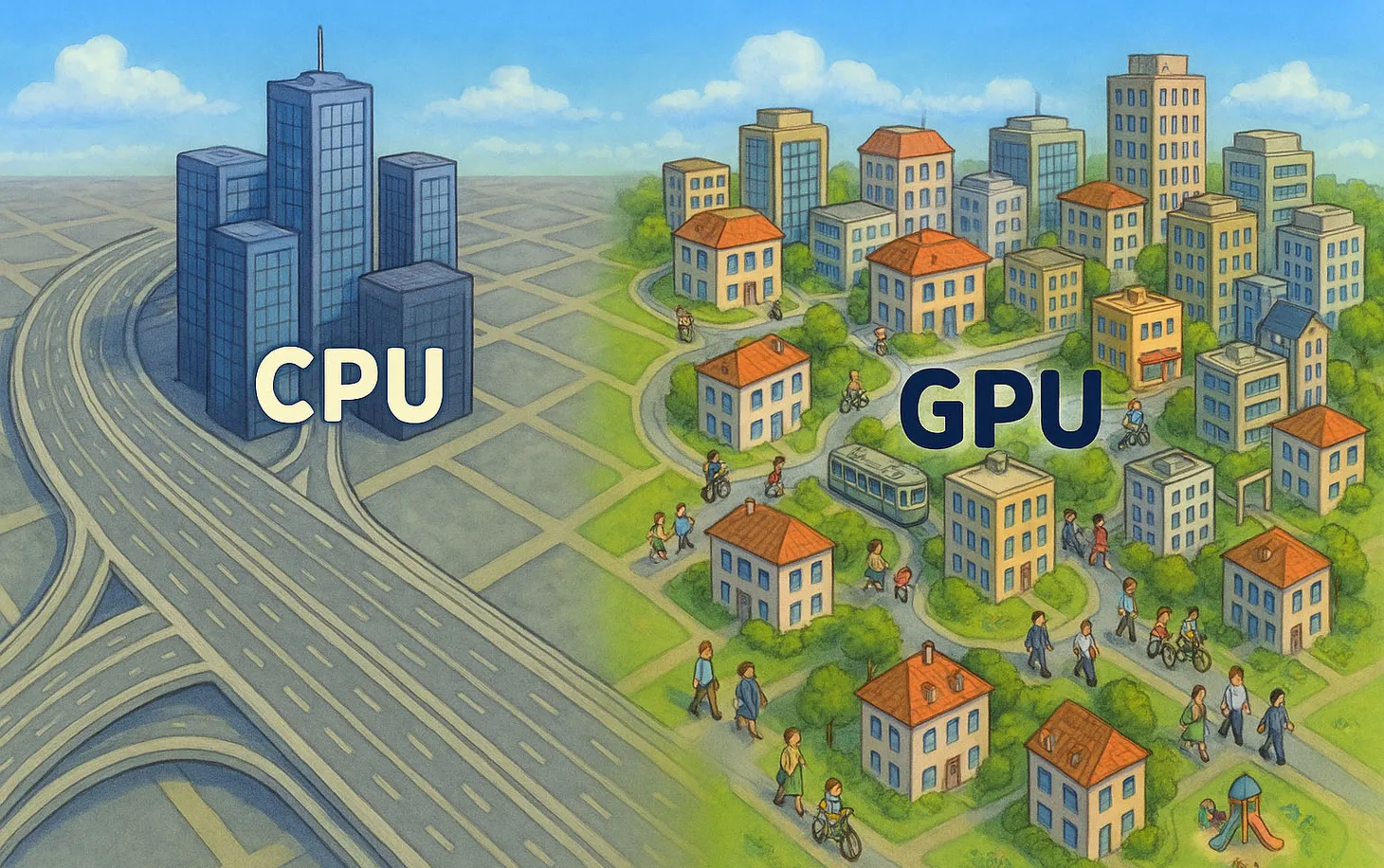

> We're in this era where fine-tuning a raw model and making something that you can converse with and has a personality. That is actually a design problem — not a hardcore engineering problem. But there are no design tools for it yet. These will emerge over time [and then the] people that have the design sensibility will be able to write instructions to those models. So [at the moment] you end up having a very small number of people that can live in both worlds and do both.
>
> It is very reminiscent of the very early days of iOS when there were very few people who knew how to make really polished apps but also knew Objective C. The companies that had it had a real edge. Now with React Native, designers can build beautiful apps, and apps just get more beautiful.

In the beginning, all products are made up and designed by people with the technical prowess to actually build them. But over time, new tools make it easier for designers and creatives to launch and shape products. Usually, it takes years for this to happen, if it happens at all. For websites and apps, it took about twenty years. And most designers are still quite limited in their ability to turn ideas into digital products or even in understanding the constraints and trade-offs involved in translating an idea into a product. In other words, even on the internet, creative people were still quite constrained. They could publish videos, photos, and blog posts. But they couldn't build actual tools.

Now, they can. AI will do to software what Instagram and TikTok did to photos and videos. It will enable every crazy person with an idea to launch their own "machine." What is the software equivalent of Gangnam Style or Donald Trump? I don't know, but we're about to find out.

This ties back to "Productivity and Bullshit":

> Whatever you are working on is valuable. Whatever you are experimenting with contributes to a complex process that, somehow, pushes all of humanity one step further. Like ants walking in different directions in the hope of finding food, one of us will find it, and the network that connects us will enable all of us to benefit.

The ants (that's us!) are now able to run and fly and zigzag in lots of new directions. What they discover and invent is going to be spectacular.

Have an excellent weekend.

Best,

Dror Poleg Newsletter
